AI content detection: boost your local business visibility

Most Canadian service business owners assume that using AI to write their website content is a guaranteed way to get penalised by search engines. That fear is understandable, but it is not quite accurate. AI content detection is far more nuanced than a simple pass-or-fail test, and the real risk is not that you use AI; it is that you use it badly. Once you understand how detection actually works, you can use AI to your advantage, producing content that is efficient to create, locally relevant, and trusted by both search engines and the AI tools that increasingly drive customer referrals across Canada.

Table of Contents

- What is AI content detection and how does it work?

- Detection methods and their limitations

- Practical strategies for Canadian businesses

- AI content detection and SEO: what Canadian businesses need to know

- Why blending AI with human expertise is a Canadian advantage

- Take the next step: AI-powered visibility for your business

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Detection isn’t foolproof | AI detectors only flag content as likely machine-written and must be used alongside human review. |
| Local expertise is key | Combining AI drafts with local insights and human edits is the best way to boost SEO in Canada. |
| SEO rewards authenticity | Search engines favour rich, locally authoritative content: real stories and expertise matter most. |
| Diverse signals resist detection | Mixing testimonials, regional data, and E-E-A-T signals keeps content trustworthy and visible. |

What is AI content detection and how does it work?

Now that we have introduced the concept, let’s break down precisely how AI content detection works and why it matters for your business.

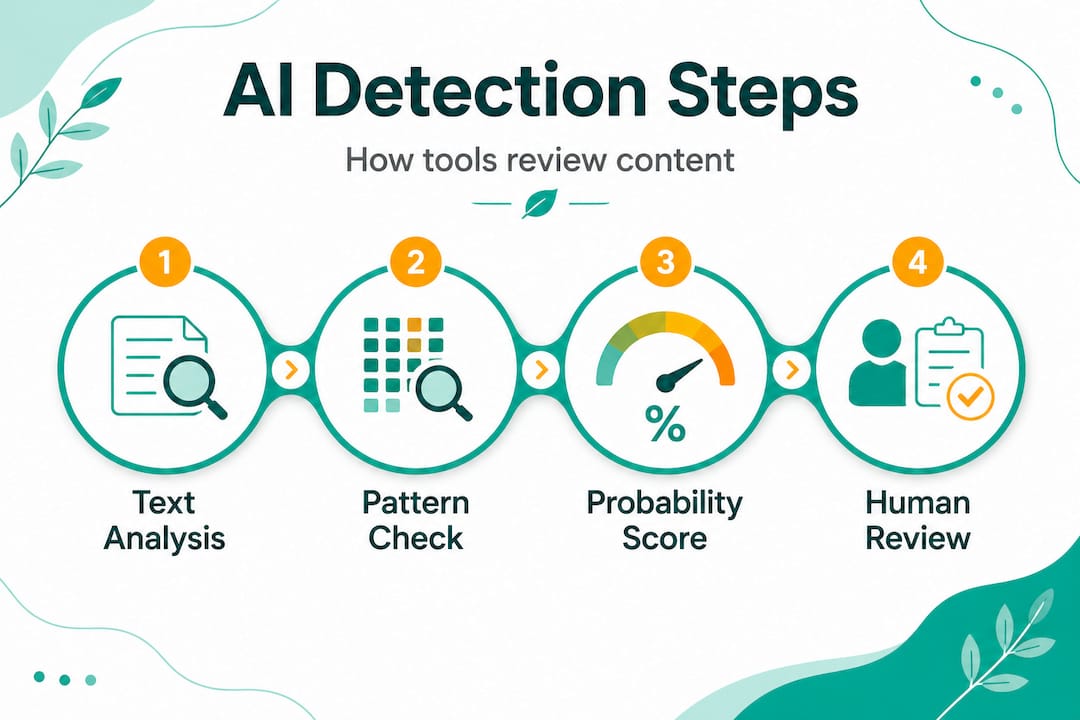

At its core, AI content detection is a screening process. It does not read your content the way a customer does. Instead, it analyses statistical patterns in the text to estimate the probability that a machine wrote it. Most detectors use machine learning classifiers trained on both human and AI-generated text to spot patterns such as low perplexity (predictable word sequences), low burstiness (uniform sentence lengths), repetition, and stylistic fingerprints.

Let’s unpack those terms quickly:

- Perplexity measures how predictable a word sequence is. AI models tend to choose the most statistically likely next word, producing low-perplexity text. Humans are less predictable — we use slang, regional phrases, and unexpected word choices.

- Burstiness refers to sentence length variation. Human writers naturally mix short punchy sentences with longer, more complex ones. AI tools often produce text with suspiciously uniform sentence lengths.

- Stylistic fingerprints are recurring patterns in vocabulary, transition phrases, and structure that certain AI models favour consistently.
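To make perplexity and burstiness less abstract, here is a minimal Python sketch. It is an illustration under simplifying assumptions, not how any commercial detector works: real tools score perplexity with a large language model, whereas this sketch uses a tiny word-frequency (unigram) model built from a reference text you supply, and it measures burstiness as the coefficient of variation of sentence length. The function names are hypothetical.

```python
import math
import re
from collections import Counter
from statistics import mean, pstdev


def sentence_lengths(text: str) -> list[int]:
    """Split on ., ! or ? and count the words in each sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Coefficient of variation of sentence length (std dev / mean).

    0.0 means every sentence has the same length; higher means more variation.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return pstdev(lengths) / mean(lengths)


def unigram_perplexity(text: str, reference: str) -> float:
    """Perplexity of `text` under an add-one-smoothed unigram model of `reference`.

    Lower means the words in `text` were more predictable from the reference.
    Real detectors use a large language model instead of word counts.
    """
    ref_words = reference.lower().split()
    words = text.lower().split()
    if not words:
        raise ValueError("text must contain at least one word")
    counts = Counter(ref_words)
    vocab_size = len(set(ref_words) | set(words))
    denominator = len(ref_words) + vocab_size  # add-one (Laplace) smoothing
    log_prob = sum(math.log((counts[w] + 1) / denominator) for w in words)
    return math.exp(-log_prob / len(words))


uniform = "We fix pipes fast. We fix leaks fast. We fix drains fast."
varied = ("Burst pipe? Call us. Our Winnipeg crew has thawed frozen lines "
          "at minus forty more times than we can count.")
print(burstiness(uniform))                       # 0.0: every sentence is four words
print(burstiness(varied) > burstiness(uniform))  # True
```

Three sentences of identical length score 0.0, while a mix of two-word and sixteen-word sentences scores much higher. That is the same rhythm difference a reader hears when reading aloud.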

Detectors produce probabilistic estimates rather than definitive judgements. A piece of content is never simply labelled “AI” or “human”; it is assigned a probability score. This is a crucial point: detection is a spectrum, not a verdict.
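The “spectrum, not a verdict” idea is easiest to see in code. The Python sketch below assumes a simple logistic (sigmoid) model that turns two features into a probability between 0 and 1, then maps that probability to cautious wording. The weights, bias, and thresholds are hypothetical numbers chosen for illustration; real classifiers learn their parameters from large training sets and use many more signals.

```python
import math


def ai_probability(burstiness: float, perplexity: float) -> float:
    """Toy logistic score: lower burstiness and lower perplexity push it up.

    The weights and bias are HYPOTHETICAL, picked for illustration only.
    """
    w_burstiness, w_perplexity, bias = -4.0, -0.05, 3.0
    z = bias + w_burstiness * burstiness + w_perplexity * perplexity
    return 1.0 / (1.0 + math.exp(-z))  # sigmoid squashes z into (0, 1)


def describe(score: float) -> str:
    """Map a probability to cautious wording; thresholds are HYPOTHETICAL."""
    if score >= 0.8:
        return "likely AI-assisted: have a person review it"
    if score >= 0.5:
        return "uncertain: have a person review it"
    return "low likelihood, but not proof either way"


score = ai_probability(burstiness=0.0, perplexity=20.0)
print(round(score, 2), describe(score))  # 0.88 likely AI-assisted: have a person review it
```

Notice that even the highest band only says “likely”. A small change to the inputs moves the score gradually rather than flipping a switch, which is why a single detector result should never be treated as final.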

Here is a quick overview of what detectors look for:

| Signal | What it means | Risk level |
|---|---|---|
| Low perplexity | Overly predictable word choices | High |
| Low burstiness | Uniform sentence lengths | High |
| Repetitive phrasing | Same transitions used repeatedly | Medium |
| Missing local context | No regional references or specifics | Medium |
| Generic structure | Cookie-cutter intros and conclusions | Medium |

For a plumber in Winnipeg or a landscaper in Victoria, this matters. If your service pages read like they were produced from a template, they may get flagged, not because you used AI, but because the content lacks the signals of genuine expertise.

“Detection is probabilistic and imperfect. Think of it as a screening tool, not a courtroom judge. The goal is to identify patterns, not to punish every business that uses technology to work smarter.”

Detection methods and their limitations

With the basics covered, it is crucial to understand both the diversity of detection methods and their real-world limitations.

Detection is not a single technology. It is a collection of approaches, each with its own strengths and blind spots. Beyond machine learning classifiers, methods include statistical watermarking embedded by AI providers, model fingerprinting, and metadata analysis, though watermarks are vulnerable to paraphrasing.

Here is how the main methods compare:

| Method | How it works | Key limitation |
|---|---|---|
| Machine learning classifiers | Trained on large datasets of human and AI text | Can misclassify human writing as AI |
| Statistical watermarking | Embeds invisible patterns in AI output | Easily removed through paraphrasing or editing |
| Model fingerprinting | Identifies patterns unique to specific AI models | Quickly outdated as models update |
| Metadata analysis | Examines document creation data | Easily spoofed or stripped |

The watermarking limitation is worth dwelling on. Some AI providers embed invisible statistical patterns into their outputs to make detection easier. The problem is that any meaningful editing — adding a local example, restructuring a paragraph, inserting a customer quote — disrupts those patterns. This means that a well-edited piece of AI-assisted content will often pass watermark detection entirely. That is good news for business owners who are doing things right.
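Watermark detection can be sketched as a simple statistics test. The Python below is loosely modelled on the “green list” idea from published watermarking research: a hash of each word pair decides whether the word is “green”, a watermarking generator would favour green words, and the detector checks whether green words appear more often than chance. Everything here (the hash scheme, the gamma of 0.5, the function names) is a hypothetical simplification, not any AI provider’s actual method.

```python
import hashlib
import math


def is_green(prev_word: str, word: str, gamma: float = 0.5) -> bool:
    """Hash the (previous word, word) pair to a number in [0, 1) and compare to gamma.

    Ordinary text hits "green" pairs about gamma of the time by chance; a
    watermarking generator would deliberately favour them.
    """
    digest = hashlib.sha256(f"{prev_word}|{word}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") / 2**64 < gamma


def watermark_z(green: int, total: int, gamma: float = 0.5) -> float:
    """One-proportion z-score: how far the green count sits above chance."""
    return (green - gamma * total) / math.sqrt(total * gamma * (1 - gamma))


def score_text(text: str, gamma: float = 0.5) -> float:
    """Count green word pairs in the text and return the z-score."""
    words = text.lower().split()
    pairs = list(zip(words, words[1:]))
    if not pairs:
        return 0.0  # too short to test
    green = sum(is_green(p, w, gamma) for p, w in pairs)
    return watermark_z(green, len(pairs), gamma)


print(watermark_z(90, 100))  # 8.0: far above chance, strong watermark signal
print(watermark_z(70, 100))  # 4.0: after heavy editing the signal weakens
```

The arithmetic shows why editing hurts watermarks. If 90 of 100 word pairs are green, the z-score is 8.0. Rewrite half the pairs in your own words and those new pairs land on green only about half the time, leaving roughly 70 of 100 green and a z-score of 4.0. Keep editing and the score drifts toward 0, the level of ordinary text.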

There is another limitation that does not get discussed enough: detection tools can be biased against non-native English writers. Studies have shown that text written by people whose first language is not English often scores higher on AI detection scales, simply because their writing style differs from the patterns the classifiers were trained on. For Canadian businesses serving diverse communities, or for owners who are themselves multilingual, this is a real concern.

The practical takeaway from all of this is straightforward:

- Detection tools are imperfect and probabilistic

- Editing and localising AI-drafted content disrupts detection signals

- Watermarks are not foolproof

- Bias in detection tools means human review is always essential

Structure matters too. Schema markup (structured data) tells search engines that your content is organised, authoritative, and locally relevant, which complements your efforts to produce trustworthy content. Similarly, building a presence on community platforms supports your content strategy, and understanding Reddit for AI visibility can give your business an additional layer of credibility in AI-generated search results.
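For readers who manage their own site, here is what structured data can look like. This Python sketch builds a schema.org JSON-LD snippet using the `Plumber` type (a more specific kind of `LocalBusiness`) that you would paste into a page’s HTML. The business name, address, phone number, and service area are hypothetical placeholders; check schema.org for the type that best matches your trade.

```python
import json

# HYPOTHETICAL business details, for illustration only.
business = {
    "@context": "https://schema.org",
    "@type": "Plumber",  # a schema.org subtype of LocalBusiness
    "name": "Example Plumbing Co.",
    "telephone": "+1-204-555-0100",
    "address": {
        "@type": "PostalAddress",
        "streetAddress": "123 Example St",
        "addressLocality": "Winnipeg",
        "addressRegion": "MB",
        "postalCode": "R3C 0A1",
        "addressCountry": "CA",
    },
    "areaServed": ["Winnipeg", "Selkirk", "Steinbach"],
}

# Wrap the JSON-LD in the script tag that goes in the page's HTML.
snippet = (
    '<script type="application/ld+json">\n'
    + json.dumps(business, indent=2)
    + "\n</script>"
)
print(snippet)
```

Generating the markup from one data source, rather than typing it by hand on each page, also keeps your name, address, and phone number identical everywhere it appears. Google’s Rich Results Test can confirm that the output parses.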

Pro Tip: Always treat AI as your first-draft assistant, not your final editor. Add at least three locally specific details — a neighbourhood name, a seasonal reference, a customer scenario — before publishing any AI-drafted content.

“The businesses that struggle with detection are not the ones using AI. They are the ones publishing AI output without any human fingerprint on it.”

Practical strategies for Canadian businesses

Armed with an understanding of detection’s limits, here’s how to actively use AI content — safely and with strategic benefit for your service business.

For local Canadian businesses, use AI for drafts and research but add human editing, local insights, E-E-A-T signals (real experience, reviews), and topical authority to avoid detection flags and boost SEO visibility. That is not just good advice for evading detection — it is the foundation of a content strategy that actually builds your business.

Here is a practical, step-by-step approach:

1. Use AI for research and structure. Ask AI tools to outline a service page, generate FAQs, or summarise common customer questions. This saves hours of work and gives you a solid skeleton to build on.

2. Add real business experience. Insert specific details from your own work. Mention the type of soil conditions you encounter in your region, the building codes you navigate in your province, or the seasonal challenges your customers face. This is the kind of detail no AI can fabricate convincingly.

3. Include customer testimonials and case studies. A sentence like “One of our clients in Kelowna saved over $400 on their heating bill after we upgraded their insulation last winter” is compelling, specific, and unlikely to read as machine-generated. It is also genuinely useful to prospective customers.

4. Build topical authority with depth. A single 300-word service page is not enough. Write detailed content that covers related topics: maintenance tips, seasonal advice, local regulations, product comparisons. Search engines reward depth, and detailed, specific writing is less likely to match the generic patterns detectors flag.

5. Review for burstiness and perplexity manually. Read your content aloud. If every sentence sounds the same length and rhythm, vary it. Add a short sentence. Then a longer one that explains the nuance behind the point you just made.

6. Incorporate regional data and references. Mention specific Canadian cities, provinces, climate zones, or regulatory bodies relevant to your trade. This signals local expertise and disrupts the generic patterns detectors look for.
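If you want a quick pre-publish check for regional detail (and for the earlier Pro Tip’s rule of at least three local specifics), here is a minimal Python sketch that counts how many terms from your own local checklist appear in a draft. The term list and function names are hypothetical; a keyword count is only a prompt to add real detail, not a measure of quality.

```python
import re

# HYPOTHETICAL checklist: replace with the neighbourhoods, seasons,
# regulators, and landmarks that actually matter to your trade and region.
LOCAL_TERMS = {
    "winnipeg", "st. boniface", "transcona", "manitoba",
    "winter", "spring thaw", "frozen pipes", "building code",
}


def local_details_found(text: str, terms: set[str] = LOCAL_TERMS) -> set[str]:
    """Return the checklist terms that appear in the text (case-insensitive, whole words)."""
    lowered = text.lower()
    return {t for t in terms if re.search(rf"\b{re.escape(t)}\b", lowered)}


def ready_to_publish(text: str, minimum: int = 3) -> bool:
    """True when the draft mentions at least `minimum` different local details."""
    return len(local_details_found(text)) >= minimum


draft = ("Spring thaw in Transcona means basement floods, "
         "and we follow the Manitoba building code.")
print(sorted(local_details_found(draft)))  # ['building code', 'manitoba', 'spring thaw', 'transcona']
print(ready_to_publish(draft))             # True
```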

Research also points to biases in AI detection that disproportionately affect certain writing styles. Being aware of this means you should not panic if a detection tool flags content that you know is authentic. Use detection scores as a guide, not a final verdict.

Pro Tip: If you do one thing this week, take your most important service page and add two things: a specific local reference and a real customer outcome. That single edit moves the needle more than any tool or trick.

The industry-specific SEO needs of a roofer in Calgary are genuinely different from those of a cleaning service in Halifax. The more your content reflects that specificity, the stronger your position becomes — both in detection terms and in actual search rankings.

AI content detection and SEO: what Canadian businesses need to know

With these strategies in hand, it is time to connect them directly to how search engines see and value your content.

Google has been clear: it does not penalise AI-generated content simply because it was generated by AI. What it penalises is low-quality, unhelpful content — regardless of how it was produced. This is a critical distinction. The question is never “was this written by a machine?” The question is “does this genuinely help the person searching for it?”

That said, AI-generated content that lacks local insight and E-E-A-T signals can absolutely hurt your rankings, not because a detector flagged it, but because it fails to demonstrate real expertise, authority, and trust. Those are the qualities Google’s ranking systems are designed to reward.

Here is what moves the needle for local Canadian service businesses:

- E-E-A-T signals: Experience, Expertise, Authoritativeness, and Trustworthiness. These are demonstrated through specific claims, credentials, customer reviews, and detailed local knowledge.

- Review velocity: Regularly acquiring new Google reviews signals that your business is active and trusted. This supports your content strategy indirectly but powerfully.

- Citation consistency: Your business name, address, and phone number should be consistent across all directories. This is a foundational trust signal.

- Content freshness: Updating service pages with seasonal information, new regulations, or recent project examples tells search engines your content is current and maintained.

- Internal linking: Connecting your service pages to relevant blog content and vice versa builds topical authority and keeps visitors engaged longer.
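Citation consistency is easy to audit once each listing is normalised, so trivial differences such as “Street” versus “St.” or different phone formatting do not count as mismatches. The Python sketch below compares each directory listing against your website’s name, address, and phone number. The listings and the small abbreviation table are hypothetical; a real audit would need a fuller set of address rules.

```python
import re

# HYPOTHETICAL listings as they might appear on different directories.
listings = {
    "Website": ("Example Plumbing Co.", "123 Example Street, Winnipeg, MB", "(204) 555-0100"),
    "Directory A": ("Example Plumbing Co", "123 Example St., Winnipeg MB", "204-555-0100"),
    "Directory B": ("Example Plumbing & Heating", "123 Example St, Winnipeg, MB", "+1 204 555 0101"),
}

ABBREVIATIONS = {"street": "st", "avenue": "ave", "road": "rd", "drive": "dr"}


def norm_phone(phone: str) -> str:
    """Keep digits only and drop a leading country code by taking the last 10."""
    digits = re.sub(r"\D", "", phone)
    return digits[-10:]


def norm_text(value: str) -> str:
    """Lowercase, strip punctuation, collapse spaces, and abbreviate street types."""
    words = re.sub(r"[^\w\s]", " ", value.lower()).split()
    return " ".join(ABBREVIATIONS.get(w, w) for w in words)


def find_mismatches(listings, reference="Website"):
    """Return (source, field) pairs that differ from the reference listing."""
    ref_name, ref_addr, ref_phone = listings[reference]
    expected = (norm_text(ref_name), norm_text(ref_addr), norm_phone(ref_phone))
    problems = []
    for source, (name, addr, phone) in listings.items():
        actual = (norm_text(name), norm_text(addr), norm_phone(phone))
        for field, exp, act in zip(("name", "address", "phone"), expected, actual):
            if exp != act:
                problems.append((source, field))
    return problems


print(find_mismatches(listings))  # [('Directory B', 'name'), ('Directory B', 'phone')]
```

Directory B would need fixing twice: its business name adds “& Heating” and its phone number ends in 0101 instead of 0100. Directory A passes despite its different punctuation and abbreviations.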

Callout: Businesses that combine AI-assisted content creation with consistent human review and local optimisation tend to see stronger engagement than those publishing unedited AI output. Engagement matters, because pages that satisfy visitors are the pages search engines want to surface.

The AI SEO process for local businesses is not about gaming algorithms. It is about building a content foundation that is genuinely useful, locally grounded, and consistently updated. When you do that, detection becomes largely irrelevant — because your content passes every meaningful test.

Pro Tip: Set a quarterly reminder to update your top three service pages with fresh local references, recent project outcomes, or updated pricing information. Freshness signals are underrated and easy to maintain.
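The quarterly reminder can be backed by a few lines of Python. This sketch assumes you keep a simple record of when each service page was last meaningfully updated (the paths and dates below are hypothetical) and lists any page older than roughly one quarter (91 days), oldest first.

```python
from datetime import date, timedelta

# HYPOTHETICAL page inventory: URL path -> date of last meaningful update.
pages = {
    "/furnace-repair": date(2024, 1, 15),
    "/ac-installation": date(2024, 5, 2),
    "/duct-cleaning": date(2023, 10, 30),
}


def stale_pages(pages, today, max_age_days=91):
    """Return paths not updated within max_age_days of `today`, oldest first."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted((p for p, d in pages.items() if d < cutoff), key=pages.get)


print(stale_pages(pages, today=date(2024, 6, 1)))  # ['/duct-cleaning', '/furnace-repair']
```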

Why blending AI with human expertise is a Canadian advantage

Finally, let’s step back and see why the Canadian approach gives local businesses an edge in the age of AI.

Here is our honest take: most of the anxiety around AI content detection is misplaced. Business owners worry about being caught using AI, when the real risk is producing content that is generic, forgettable, and disconnected from the communities they serve. Detection tools are not the enemy. They are, in a way, your quality control system.

Detection is probabilistic and imperfect; it works best as a screening tool combined with human review, not as a sole judgement. We have seen this play out repeatedly. A well-edited, locally grounded service page written with AI assistance consistently outperforms a “purely human” page that is vague, generic, and thin on specifics.

The Canadian advantage is real. The bias against non-native writers shows how much detectors depend on the diversity of their training data. For Canadian businesses, blending AI with local expertise builds trust and rankings in ways no offshore content farm can replicate. When a homeowner in Edmonton searches for a furnace repair company, they want to see content that reflects their climate, their city, their concerns. That specificity is your moat.

We also believe that the rise of AI-generated search results — where tools like ChatGPT and Perplexity cite specific businesses in their answers — actually rewards this approach. The businesses getting cited are the ones with deep, specific, trustworthy content. Understanding Reddit’s role in AI visibility is part of this picture too, since community-driven platforms increasingly feed the training data that AI tools draw from when making recommendations.

The flywheel works like this: authentic, locally specific content builds search trust, which drives rankings, which drives reviews, which feeds AI citation engines, which brings more customers. AI detection tools are just one checkpoint in that cycle — and when your content is genuinely good, they are a checkpoint you clear without even trying.

Take the next step: AI-powered visibility for your business

You now understand how AI content detection works, where its limits lie, and what it actually takes to build content that ranks and gets cited by AI tools. That knowledge is powerful. But knowledge without execution does not grow a business.

At Locally Visible, we do this work for Canadian service businesses every day. Learn about our AI SEO process: we build content strategies that blend AI efficiency with the local expertise your market demands. Whether you are a plumber in Saskatoon or a landscaper in Ottawa, we find industry-specific solutions tailored to your trade and your region. Our guarantee is straightforward: cited by ChatGPT in 90 days, or we work free until you are. If you are ready to see what transparent, results-driven AI SEO looks like, see our AI SEO pricing and take the next step today.

Frequently asked questions

Does using AI-generated content hurt my search rankings in Canada?

Not automatically, but unedited AI content that lacks local insight and E-E-A-T signals can hurt rankings by failing to demonstrate genuine expertise. Adding human edits and local insights is the key to making AI-assisted content work in your favour.

How do AI detectors spot machine-written content?

Detectors analyse statistical markers like perplexity, burstiness, and repetition to assign a probability score to text. Machine learning classifiers trained on both human and AI-written text flag content that looks statistically too predictable or uniform to be human-written.

Are AI watermarks always reliable for detection?

No. Watermarks are vulnerable to paraphrasing and editing, which means any meaningful human revision to an AI-drafted piece will typically disrupt the watermark signal, making it an unreliable sole detection method.

What is E-E-A-T and why does it matter for local SEO?

E-E-A-T stands for Experience, Expertise, Authoritativeness, and Trustworthiness. Strong E-E-A-T signals help AI-assisted content demonstrate genuine value to search engines, which is especially important for local Canadian businesses competing in service industries where trust is everything.
